India’s 2026 AI Rules: The 3-Hour Takedown & Safe Harbour Guide

India’s 2026 IT Rules Amendment and the Shift in AI Governance

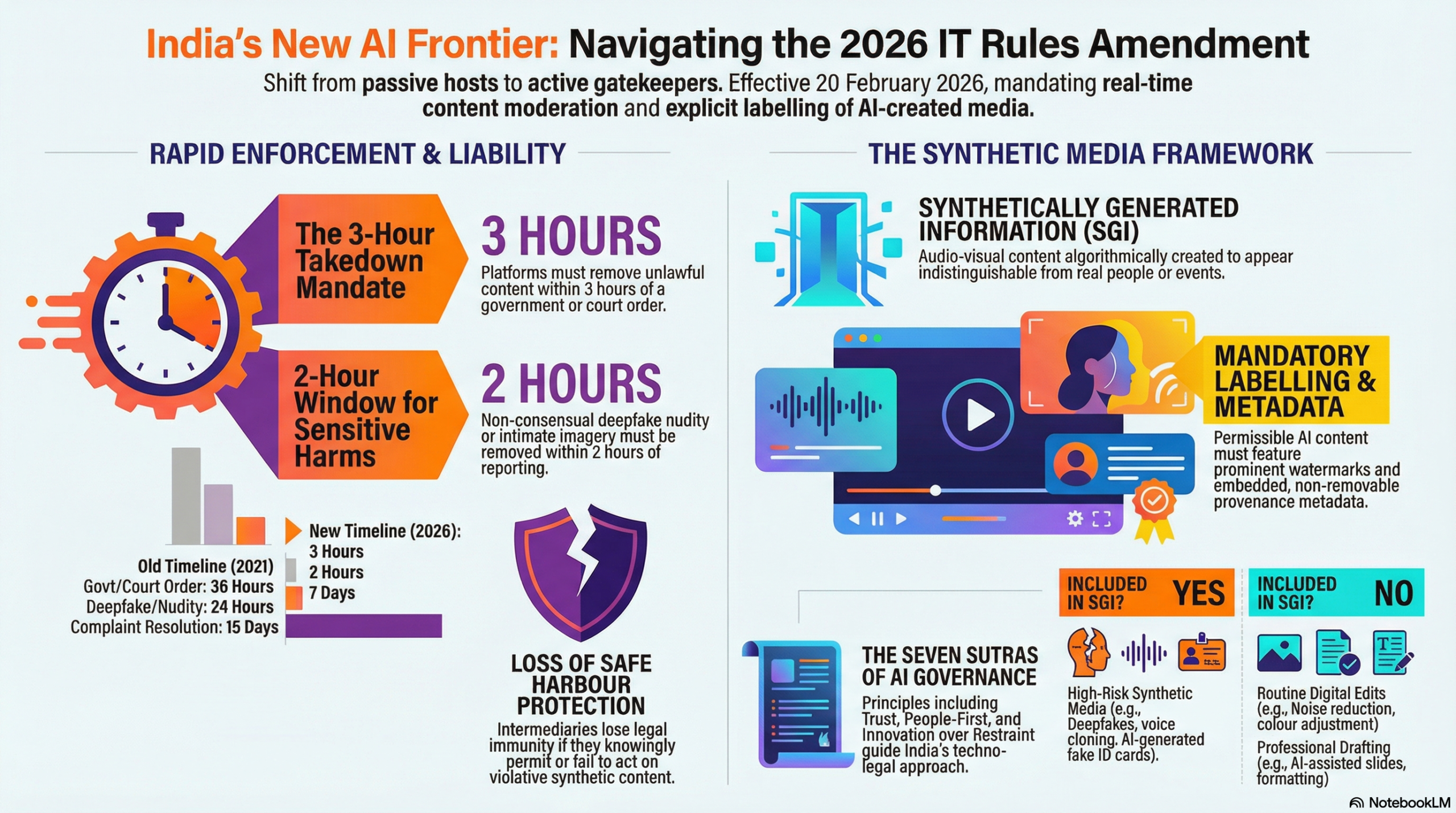

On February 20, 2026, something subtle but seismic happened in India’s digital ecosystem. The comfortable doctrine of “passive hosting”, the long-standing shield platforms leaned on, effectively dissolved. That was the day the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026 came into force, after being notified by the Ministry of Electronics and Information Technology (MeitY) on February 10, 2026, under G.S.R. 120(E).

For years, many intermediaries operated in a largely reactive posture: respond to complaints, take down what’s flagged, move on. That model is no longer enough. The 2026 framework replaces reactive moderation with something more muscular, a proactive, technically embedded compliance architecture. It doesn’t just suggest vigilance; it structurally requires it. Whether you are a startup building generative tools, a global social media platform, or an enterprise deploying AI at scale in India, the ground beneath you has shifted.

At the centre of this shift is a recalibration of intermediary liability. This is not a cosmetic update to the IT Rules, 2021. It’s closer to a redefinition of what it means to “host” content in the age of synthetic media. The 2026 Amendment Rules, published in the Gazette of India Extraordinary, introduce an entirely new legal category, Synthetically Generated Information (SGI), and then wrap it in obligations, deadlines, and liability triggers that leave very little room for ambiguity.

And this didn’t happen in isolation. The amendment sits squarely within India’s broader national ambition, Viksit Bharat 2047. The aspiration to be a fully developed nation by the centenary of independence is not merely economic; it is technological and institutional. AI, in this vision, is both amplifier and risk surface.

Union Minister Ashwini Vaishnaw’s remarks at the World Economic Forum in Davos in January 2026 made this clear: India’s regulatory posture is explicitly “techno-legal.” Law alone is insufficient; technical safeguards must do part of the governing work. Deepfakes, bias, and impersonation cannot be addressed by statute books alone. They require architecture.

The 2026 Amendment Rules are the most concrete articulation of that philosophy so far. They represent a decisive moment: AI governance in India is no longer aspirational, no longer advisory, and certainly no longer optional.

What Is Synthetically Generated Information (SGI) Under India’s 2026 AI Regulatory Framework?

If the 2026 Amendment Rules have a beating heart, it is the formal definition of Synthetically Generated Information (SGI). Everything else, including the takedown clocks, the labelling rules, the compliance burdens, flows outward from this one carefully drafted paragraph.

According to the updated IT Rules text published by MeitY, SGI means:

“audio, visual or audio-visual information which is artificially or algorithmically created, generated, modified or altered using a computer resource, in a manner that such information appears to be real, authentic or true and depicts or portrays any individual or event in a manner that is, or is likely to be perceived as indistinguishable from a natural person or real-world event.”

Read that slowly. It’s precise and almost surgical.

The law isn’t concerned with “AI” in the abstract. It’s focused on something more specific and more dangerous: synthetic media that convincingly impersonates reality. Deepfake videos and voice clones that mimic a CEO’s cadence or a politician’s tone. AI-generated photographs that put real people in situations they were never in. Algorithmically altered recordings that blur the line between fact and fabrication.

It covers audio, visual, and audio-visual content: the full spectrum of sensory persuasion. Then, interestingly, it draws a boundary. Pure text, as of now, sits largely outside the SGI definition. Unless that text is weaponised as part of an unlawful act (for example, paired with a deceptive synthetic video), language model outputs by themselves are not automatically swept into the SGI category. For companies building chatbots, generative writing tools, or LLM-based products, this carve-out matters. It’s breathing room. Not permanent immunity, but space.
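To make the scope concrete, here is a minimal Python sketch of a first-pass screen a product team might run over its own content types. It encodes only the elements of the quoted definition (audio or visual media, artificial creation or alteration, apparent authenticity, depiction of a real person or event) plus the text carve-out described above. The class, field, and function names are hypothetical, and real classification needs legal review rather than a boolean test.

```python
from dataclasses import dataclass

# Media types named in the SGI definition; plain text is deliberately absent.
SGI_MEDIA_TYPES = {"audio", "visual", "audio-visual"}


@dataclass
class ContentItem:
    media_type: str                     # e.g. "audio", "visual", "audio-visual", "text"
    ai_created_or_altered: bool         # artificially or algorithmically generated/modified
    appears_authentic: bool             # presented so that it appears real, authentic or true
    depicts_real_person_or_event: bool  # could be perceived as a natural person or real event


def within_sgi_definition(item: ContentItem) -> bool:
    """Hypothetical first-pass screen: every element of the definition must be present.

    It ignores the good-faith exclusions and the separate question of text
    used as part of an unlawful act.
    """
    return (
        item.media_type in SGI_MEDIA_TYPES
        and item.ai_created_or_altered
        and item.appears_authentic
        and item.depicts_real_person_or_event
    )


voice_clone = ContentItem("audio", True, True, True)
chatbot_reply = ContentItem("text", True, True, True)
print(within_sgi_definition(voice_clone))    # True
print(within_sgi_definition(chatbot_reply))  # False: text sits outside the definition
```

Requiring every element at once mirrors how the definition is drafted: a realistic image of a fictional scene, or an obviously stylised cartoon, would fall out at different steps.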

Good-Faith Exclusions: What Does Not Qualify as SGI?

The drafters clearly understood the risk of overreach. Not every digital edit is a deception. Not every algorithmic enhancement is a threat. So the Rules build in exclusions that are practical and grounded.

The first exclusion covers routine editing and technical improvements: standard photo touch-ups, colour correction, noise reduction, compression, formatting tweaks, and transcription. These are explicitly excluded so long as they do not materially alter the substance or meaning of the content. A news photographer adjusting white balance isn’t creating synthetic media. A podcast editor cleaning up background hiss isn’t crossing a regulatory line.

That distinction matters. Otherwise, the entire creative economy would be in regulatory crosshairs.

Educational, research, and template-based content is also excluded. Good-faith creation of documents, presentations, PDFs, training materials, and research outputs, even when illustrative, hypothetical, or template-based, falls outside SGI so long as it does not result in a false document or false electronic record.

There’s nuance here. The law is not hostile to simulation in an academic or developmental context. It’s hostile to deception masquerading as authenticity.

As for accessibility tools, this exclusion feels particularly deliberate. Tools that improve accessibility, clarity, translation, description, searchability, or discoverability, without materially manipulating the underlying audio-visual content, are outside the SGI net. Text-to-speech engines used by the differently abled, auto-captioning services, and translation overlays are enhancements, not impersonations.
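The three exclusions share a pattern: a permitted purpose plus a condition that must also hold. A minimal sketch of that logic, with a hypothetical enum and function name, shows why a matching purpose is never sufficient on its own:

```python
from enum import Enum, auto


class Purpose(Enum):
    ROUTINE_EDIT = auto()         # touch-ups, colour correction, noise reduction, compression, formatting, transcription
    GOOD_FAITH_DOCUMENT = auto()  # documents, presentations, PDFs, training material, research outputs
    ACCESSIBILITY = auto()        # captioning, translation, text-to-speech, description, searchability
    OTHER = auto()


def falls_within_exclusion(purpose: Purpose,
                           materially_alters_content: bool,
                           produces_false_record: bool) -> bool:
    """Hypothetical screen mirroring the three good-faith exclusions.

    Each exclusion carries the condition described in the text.
    """
    if purpose in (Purpose.ROUTINE_EDIT, Purpose.ACCESSIBILITY):
        # Must not materially alter the substance, meaning, or underlying content.
        return not materially_alters_content
    if purpose is Purpose.GOOD_FAITH_DOCUMENT:
        # Must not result in a false document or false electronic record.
        return not produces_false_record
    return False


# White-balance fix on a news photo: excluded.
print(falls_within_exclusion(Purpose.ROUTINE_EDIT, False, False))  # True
# A "touch-up" that changes what the photo shows: not excluded.
print(falls_within_exclusion(Purpose.ROUTINE_EDIT, True, False))   # False
```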

The signal to businesses is clear, almost reassuring in tone: the target is not innovation, not creativity, and not technological augmentation. The target is deception that convincingly wears the mask of reality.

And that distinction, enhancement versus impersonation, is going to define compliance strategies across the ecosystem.

The 3-Hour Takedown Mandate Under India’s 2026 AI Regulatory Framework

If there is one provision that has kept compliance teams awake at night, it’s this one. The amended Rule 3(1)(d) now requires intermediaries to remove or disable access to unlawful information, including unlawful SGI, within three hours of receiving actual knowledge through a government or court order.

To understand how dramatic that is, rewind to the 2021 framework. Intermediaries previously had 36 hours to respond to takedown orders. That wasn’t leisurely, but it was workable. The 2026 amendment compresses that window by roughly 91.7% for the most urgent categories. Even in less severe contexts, response time has been cut by about 83%.
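The headline figure is simple arithmetic: moving from a 36-hour window to a 3-hour one removes 33 of the 36 hours. A tiny helper makes the calculation explicit. The 83% figure depends on baselines the text does not spell out, so it is not reproduced here.

```python
def percent_reduction(old_hours: float, new_hours: float) -> float:
    """Percentage by which a response window shrinks from old_hours to new_hours."""
    if old_hours <= 0:
        raise ValueError("old_hours must be positive")
    return (old_hours - new_hours) / old_hours * 100


print(round(percent_reduction(36, 3), 1))  # 91.7
```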

This isn’t a policy tweak. It’s a tectonic shift in operational expectations.

And here’s where the abstract legal language collides with reality. India runs on a single time zone, IST (UTC+5:30), which may sit many hours away from wherever a platform’s reviewers are based. Orders may arrive in Hindi, Tamil, Telugu, Bengali, or a mix of them. They may land at 2:17 a.m. on a Sunday. They may concern content that has already been reshared thousands of times across mirrored accounts.

A three-hour clock in that environment effectively mandates near-real-time legal triage.

For global platforms whose Trust & Safety teams sit in San Francisco, Dublin, or Singapore, this requirement forces a rethink. You can’t route everything through a distant compliance desk anymore and hope for the best. The amendment implicitly demands localized 24/7 compliance cells, language expertise, escalation ladders that function under pressure, and automated detection systems that can pre-flag high-risk SGI before a formal order even arrives.
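For a distributed team, the first engineering task is simply computing the deadline correctly across time zones. The sketch below uses only Python’s standard library. It assumes the receipt time of an order is recorded in IST, which is a fixed UTC+5:30 offset with no daylight saving, and that the team wants the deadline in UTC for a global on-call rota. The date is arbitrary and only illustrates the 2:17 a.m. Sunday scenario above.

```python
from datetime import datetime, timedelta, timezone

# IST is a fixed UTC+5:30 offset with no daylight-saving changes.
IST = timezone(timedelta(hours=5, minutes=30), "IST")
TAKEDOWN_WINDOW = timedelta(hours=3)  # government or court order, per the amended Rule 3(1)(d)


def takedown_deadline(received_at: datetime) -> datetime:
    """Return the latest compliant action time for an order received at received_at."""
    if received_at.tzinfo is None:
        raise ValueError("received_at must be timezone-aware")
    return received_at + TAKEDOWN_WINDOW


# An order landing at 2:17 a.m. IST on Sunday, 1 March 2026 (illustrative date).
received = datetime(2026, 3, 1, 2, 17, tzinfo=IST)
deadline = takedown_deadline(received)
print(deadline.isoformat())                           # 2026-03-01T05:17:00+05:30
print(deadline.astimezone(timezone.utc).isoformat())  # 2026-02-28T23:47:00+00:00
```

Rejecting naive datetimes is a deliberate choice: a timestamp without an offset is exactly the kind of ambiguity that can silently shift a deadline by five and a half hours.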

It also nudges companies toward technical redundancy. If human review falters, systems must hold. If systems misfire, humans must catch it. The margin for delay has evaporated.

Two-Hour Exception for NCII and Victim-Flagged SGI

For Non-Consensual Intimate Imagery (NCII) involving synthetic content, intermediaries have just two hours to act once flagged by a victim or their representative. No ambiguity in the legislative intent here. The rule reflects a prioritization of dignity and harm prevention over procedural comfort. Deepfake pornography and sexually manipulative synthetic media have caused profound personal damage in recent years. Lawmakers appear determined not to let procedural lag compound that harm.

In practice, this means platforms must design workflows that distinguish between categories of urgency instantly. An NCII complaint cannot sit in the same queue as a standard defamation notice. Triage must be intelligent. And fast.
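One common way to keep an NCII complaint from sitting behind a routine notice is an earliest-deadline-first queue. Each item is keyed by its own deadline, so a 2-hour NCII flag that arrives later can still jump ahead of a 3-hour order. This is a minimal sketch built on Python’s `heapq`. The category keys are hypothetical, and only the 2-hour and 3-hour windows come from the Rules as described here. Other grievance types would need their own windows, which are left out.

```python
import heapq
from datetime import datetime, timedelta, timezone

# Response windows described in the text; the category keys are hypothetical labels.
WINDOWS = {
    "ncii_victim_flag": timedelta(hours=2),
    "government_or_court_order": timedelta(hours=3),
}


class TriageQueue:
    """Earliest-deadline-first queue of takedown items."""

    def __init__(self) -> None:
        self._heap: list[tuple[datetime, int, str]] = []
        self._counter = 0  # tie-breaker so equal deadlines pop in arrival order

    def push(self, item_id: str, category: str, received_at: datetime) -> datetime:
        deadline = received_at + WINDOWS[category]
        heapq.heappush(self._heap, (deadline, self._counter, item_id))
        self._counter += 1
        return deadline

    def pop_most_urgent(self) -> tuple[str, datetime]:
        deadline, _, item_id = heapq.heappop(self._heap)
        return item_id, deadline


t0 = datetime(2026, 3, 1, 10, 0, tzinfo=timezone.utc)
queue = TriageQueue()
queue.push("order-17", "government_or_court_order", t0)                # due 13:00 UTC
queue.push("ncii-42", "ncii_victim_flag", t0 + timedelta(minutes=30))  # due 12:30 UTC
print(queue.pop_most_urgent()[0])  # ncii-42
```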

The 3-hour mandate isn’t just about speed. It’s about reengineering compliance into a core operational function. It turns response time into a legal risk variable. Every minute between notice and action now carries measurable exposure.

For many intermediaries, especially smaller ones, this may be the most punishing element of the 2026 framework. For larger ones, it will require infrastructure spending that likely runs into the millions. Either way, the era of leisurely review cycles is over.

Mandatory SGI Labeling and Technical Provenance Requirements Under the 2026 IT Rules

Taking content down is only half the story. The 2026 Rules are just as concerned with what stays up.

Under the new framework, synthetically generated information that remains accessible on a platform must be labelled clearly, not subtly and not buried in a dropdown. Visual content must carry a visible disclosure such as “Synthetically Generated” (or its equivalent), displayed in a way that an ordinary user cannot reasonably miss. For audio, the requirement goes further: there must be an audible disclosure, a spoken acknowledgement that the voice or recording has been artificially generated or significantly altered.
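A labelling pipeline has to branch on media type, because the Rules as described here ask for a visible label on visual content and a spoken disclosure on audio. The sketch below assumes that audio-visual content needs both, which is a reasonable reading but not one the text states explicitly. The audio disclosure string and the function name are placeholders, not prescribed wording.

```python
VISIBLE_LABEL = "Synthetically Generated"
# Placeholder wording: the Rules as described require an audible disclosure, not this exact text.
AUDIO_DISCLOSURE = "This audio has been synthetically generated or altered."


def required_disclosures(media_type: str, is_sgi: bool) -> dict[str, str]:
    """Map a piece of content to the disclosures it needs before it can stay live."""
    if not is_sgi:
        return {}
    disclosures: dict[str, str] = {}
    if media_type in ("visual", "audio-visual"):
        disclosures["visible"] = VISIBLE_LABEL
    if media_type in ("audio", "audio-visual"):
        disclosures["audible"] = AUDIO_DISCLOSURE
    return disclosures


print(required_disclosures("audio-visual", True))
```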

This is more than cosmetic transparency. It’s an attempt to interrupt the moment of deception. The law assumes something simple but powerful: if viewers know they are watching synthetic media, the persuasive force changes. Maybe not always, but often enough to matter.

But labelling is only the surface layer.

Metadata, Digital Provenance and Anti-Tampering Obligations

The Rules also introduce what feels, in practice, like a digital chain of custody for AI-generated content. Platforms hosting SGI must ensure that such content carries permanent metadata and unique identifiers, technical markers embedded within the file itself. These markers are intended to record origin, the tool used to generate the content, and any subsequent modifications.

Think of it as a forensic breadcrumb trail.

And critically, these identifiers must be persistent. They cannot be casually stripped out, overwritten, or suppressed without detection. The design logic here is obvious: provenance is meaningless if it disappears at export.

This leads to one of the amendment’s more stringent aspects: the anti-tampering provisions. Intermediaries are explicitly prohibited from offering or enabling tools that remove or conceal these digital fingerprints. A “clean export” function that quietly erases metadata? Potentially non-compliant. A third-party API integration that allows users to bypass provenance tagging? Equally risky.
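Platforms will ultimately follow whatever technical standard is prescribed or adopted (industry efforts such as C2PA content credentials already exist), but the core idea of tamper-evident provenance can be sketched with the standard library alone. The example assumes a hypothetical JSON manifest that records origin, generating tool, and modification history. The manifest is bound to the file’s SHA-256 hash and signed with an HMAC key held by the platform. Changing the content, editing the manifest, or stripping the signature all cause verification to fail. A production scheme would more likely use public-key signatures so third parties can verify without the secret. This is an illustration of the concept, not a compliant implementation.

```python
import hashlib
import hmac
import json

SECRET_KEY = b"replace-with-a-managed-key"  # hypothetical; keep real keys in a key-management service


def build_manifest(content: bytes, origin: str, tool: str, modifications: list[str]) -> dict:
    """Create a signed provenance record bound to the exact content bytes."""
    record = {
        "origin": origin,
        "tool": tool,
        "modifications": modifications,
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    payload = json.dumps(record, sort_keys=True).encode()
    record["signature"] = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Return True only if the manifest is intact and matches the content."""
    claimed = manifest.get("signature")
    if not claimed:
        return False  # signature stripped or never added
    record = {k: v for k, v in manifest.items() if k != "signature"}
    payload = json.dumps(record, sort_keys=True).encode()
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(claimed, expected):
        return False  # manifest fields were edited
    return record.get("content_sha256") == hashlib.sha256(content).hexdigest()


video = b"...synthetic video bytes..."
manifest = build_manifest(video, origin="user-upload", tool="example-image-model",
                          modifications=["generated"])
print(verify_manifest(video, manifest))                        # True
print(verify_manifest(video + b"edit", manifest))              # False: content changed
print(verify_manifest(video, {**manifest, "tool": "camera"}))  # False: manifest edited
```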

This obligation extends beyond traditional social media platforms and brings cloud-based creative suites, video editors, AI image generators, and voice cloning services under its purview. Any intermediary sitting in the synthetic content pipeline must examine whether its product design inadvertently enables metadata stripping.

That’s where compliance becomes architectural. It’s not enough to add a watermark overlay and move on. The law envisions layered transparency: visible disclosure plus embedded provenance plus anti-tampering controls. Remove one layer, and the system weakens. Remove two, and you’re likely in violation.
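The layered model lends itself to a simple release-gate check. This sketch assumes each piece of SGI has already been tested for the three layers named above (the field names are hypothetical). It reports which layers are missing, so a pipeline can block anything incomplete.

```python
from dataclasses import dataclass


@dataclass
class TransparencyStatus:
    visible_or_audible_label: bool  # a disclosure the user can see or hear
    embedded_provenance: bool       # persistent metadata or unique identifier present
    tamper_protection: bool         # no export path that strips the provenance


def missing_layers(status: TransparencyStatus) -> list[str]:
    """List absent transparency layers; an empty list means all three are in place."""
    layers = {
        "disclosure": status.visible_or_audible_label,
        "provenance": status.embedded_provenance,
        "anti-tampering": status.tamper_protection,
    }
    return [name for name, present in layers.items() if not present]


watermark_only = TransparencyStatus(True, False, False)
print(missing_layers(watermark_only))  # ['provenance', 'anti-tampering']
```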

There’s a philosophical undercurrent here. India’s approach suggests that authenticity in the AI age is not just a social norm but a technical property that must be engineered into systems. Trust is no longer assumed but must be built into the file itself.

Enhanced SGI Compliance Obligations for Significant Social Media Intermediaries (SSMIs)

Not all intermediaries are equal under the 2026 framework. Scale and reach matter. Influence, especially algorithmically amplified influence, matters even more.

Significant Social Media Intermediaries (SSMIs), defined as platforms with more than 5 million registered users in India, already operated under heightened scrutiny since the 2021 Rules. But the 2026 amendments attach a new layer of SGI-specific responsibilities that materially increase their compliance burden.
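Because the threshold is a bright line, it is worth encoding explicitly and monitoring continuously. This minimal sketch assumes that the 5 million figure counts registered users in India and reads “more than” as a strict inequality; both points should be confirmed against the notified threshold. The early-warning margin is a hypothetical internal setting.

```python
SSMI_THRESHOLD = 5_000_000  # registered users in India, per the text above
WARNING_MARGIN = 0.9        # hypothetical: start preparing at 90% of the threshold


def ssmi_status(registered_users_in_india: int) -> str:
    """Classify a platform relative to the SSMI threshold."""
    if registered_users_in_india > SSMI_THRESHOLD:
        return "SSMI"  # declaration and detection duties apply
    if registered_users_in_india >= SSMI_THRESHOLD * WARNING_MARGIN:
        return "approaching"
    return "below"


print(ssmi_status(4_600_000))  # approaching
```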

The logic is straightforward: the larger the audience, the greater the potential harm if synthetic content spreads unchecked.

User Declaration and Independent Detection Duties for SSMIs

The most notable addition is the user declaration requirement at upload. When a user prepares to publish content that includes or may include synthetically generated material, the platform must present a formal disclosure interface requiring the user to self-declare AI usage.

This isn’t a symbolic checkbox but a legally meaningful attestation. A user is effectively stating, on record, whether the content contains synthetic elements. Yet the Rules are careful not to let platforms hide behind that declaration.

SSMIs have an independent verification duty. They must deploy automated filtering tools and technical detection systems to cross-check user declarations against the actual content.

This dual structure, declaration plus detection, is deliberate. It closes what would otherwise be an obvious loophole. A dishonest user cannot simply click “No AI used” and shift liability upstream. Platforms are expected to apply “reasonable and appropriate technical measures” to independently assess whether content is synthetic.
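In code, declaration plus detection amounts to reconciling two signals. The sketch assumes a detector that returns a probability that content is synthetic. The detector itself, the threshold, and the outcome labels are hypothetical, since the Rules as described here require only “reasonable and appropriate technical measures” and prescribe no scoring approach.

```python
def reconcile(user_declared_ai: bool, detector_score: float,
              flag_threshold: float = 0.8) -> str:
    """Compare a user's AI declaration with an automated detector's score (0.0 to 1.0)."""
    if not 0.0 <= detector_score <= 1.0:
        raise ValueError("detector_score must be between 0 and 1")
    if user_declared_ai:
        return "label_as_sgi"     # declared synthetic: apply the required labels
    if detector_score >= flag_threshold:
        return "mismatch_review"  # declared "no AI", but the detector disagrees
    return "no_action"


print(reconcile(False, 0.93))  # mismatch_review
```

The mismatch branch carries the legal weight: it is the case where relying on the user’s word alone would leave the platform exposed.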

That phrase, reasonable and appropriate, is doing a lot of work here. It leaves room for technological evolution, but it also signals that superficial compliance will not suffice.

In practice, this likely means one (or more) of the following:

- Integration of deepfake detection models

- Provenance verification APIs

- Automated anomaly detection systems

- Risk-scoring pipelines that flag high-probability synthetic content
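The last item in that list, a risk-scoring pipeline, is typically where the other components meet. This minimal sketch computes a weighted score over hypothetical signals. The weights, signal names, and routing thresholds are illustrative assumptions that would need tuning on real data; none of them come from the Rules.

```python
from dataclasses import dataclass

# Hypothetical weights; each signal is normalised to the range 0.0-1.0.
WEIGHTS = {"detector": 0.6, "anomaly": 0.25, "missing_provenance": 0.15}


@dataclass
class Signals:
    detector: float            # output of a deepfake-detection model
    anomaly: float             # output of an automated anomaly detector
    provenance_verified: bool  # result of a provenance verification API


def risk_score(s: Signals) -> float:
    """Weighted sum of the signals; higher means more likely synthetic and unlabelled."""
    missing_provenance = 0.0 if s.provenance_verified else 1.0
    return (WEIGHTS["detector"] * s.detector
            + WEIGHTS["anomaly"] * s.anomaly
            + WEIGHTS["missing_provenance"] * missing_provenance)


def route(score: float) -> str:
    """Map a score to a hypothetical review lane."""
    if score >= 0.7:
        return "priority_human_review"
    if score >= 0.4:
        return "standard_review"
    return "publish"


signals = Signals(detector=0.9, anomaly=0.6, provenance_verified=False)
print(round(risk_score(signals), 2), route(risk_score(signals)))  # 0.84 priority_human_review
```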

For global platforms, this may require procurement from specialized vendors. For homegrown startups, it may mean building in-house detection capability. Either way, it is now a cost of operating at scale in India.

There’s an important psychological shift embedded here too. Under previous regimes, platforms could plausibly argue they were misled by users. Under the 2026 framework, that defense narrows considerably. Being deceived by your own user base is no longer a complete shield if you failed to deploy adequate detection tools.

The compliance loop has been tightened.

And for platforms hovering just under the 5 million user threshold, this section will feel particularly consequential. Growth now carries regulatory acceleration. Crossing that threshold is not just a milestone; it triggers a new compliance architecture.

Loss of Safe Harbour Under Section 79: Intermediary Liability Risks Explained

For years, Section 79 of the IT Act, 2000, functioned as a kind of legal airbag for intermediaries. As long as platforms didn’t initiate or modify user content and acted expeditiously when notified of unlawful material, they were shielded from direct liability. “Safe harbour” wasn’t just a technical term; it was the structural foundation of India’s platform economy.

The 2026 Rules tighten that shield.

Under the amended framework, intermediaries lose Section 79 protection if they fail to comply with the 3-hour takedown mandate after receiving a valid government or court order. They also risk forfeiting that immunity if they knowingly promote, host, or allow the continued circulation of harmful SGI after becoming aware of it.

That last phrase, “becoming aware”, is doing heavy legal lifting.

The amendment introduces what many analysts are calling a quasi-strict liability standard for the most serious categories of synthetic harm. The doctrine of “wilful blindness” is now firmly embedded in the intermediary liability landscape. In other words, if a platform deliberately avoids knowing what its own systems are surfacing, that avoidance may not protect it.

Knowledge can be triggered in multiple ways: a grievance complaint, a third-party notification, a court order, or even the platform’s own detection tools flagging suspicious content. Once awareness exists, however it arises, the clock starts ticking.
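Because awareness can arrive through several channels, an auditable record of when the platform first knew is worth keeping. This minimal sketch uses a hypothetical in-memory log keyed by content ID; a real system would use durable storage. It records every trigger and reports the earliest one, which is the conservative point from which to measure response time.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from typing import Optional

# Awareness channels mentioned in the text; the string labels are hypothetical.
TRIGGERS = {"grievance", "third_party_notice", "court_order", "internal_detection"}


class KnowledgeLog:
    """Append-only record of when, and how, the platform learned about each item."""

    def __init__(self) -> None:
        self._events: dict[str, list[tuple[datetime, str]]] = defaultdict(list)

    def record(self, content_id: str, trigger: str, at: datetime) -> None:
        if trigger not in TRIGGERS:
            raise ValueError(f"unknown trigger: {trigger}")
        self._events[content_id].append((at, trigger))

    def first_awareness(self, content_id: str) -> Optional[tuple[datetime, str]]:
        """Earliest recorded trigger for this item, or None if nothing is logged."""
        events = self._events.get(content_id)
        return min(events) if events else None


t = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
log = KnowledgeLog()
log.record("vid-7", "court_order", t + timedelta(hours=1))
log.record("vid-7", "internal_detection", t)
print(log.first_awareness("vid-7")[1])  # internal_detection
```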

The categories of harm specifically contemplated here are not abstract: deepfake-driven identity impersonation, non-consensual intimate imagery, AI-generated child sexual abuse material (explicitly linked to the POCSO Act, 2012), and electoral manipulation through synthetic media. In the rule-makers’ view, these are not edge cases; they are foreseeable risks of modern AI systems.

And then there is a provision that may quietly reshape accountability for victims.

The 2026 Rules now require platforms, upon receiving a valid complaint from a victim or their representative, to disclose the identity of a user who has violated SGI provisions, including creators or distributors of harmful synthetic content targeting that individual. This requirement, read alongside the Bharatiya Nyaya Sanhita, 2023, and the Indecent Representation of Women (Prohibition) Act, 1986, creates a more direct pathway for survivors of deepfake-based harassment to pursue legal recourse.

That is a significant shift. For many victims, the most frustrating part of digital harm has been anonymity, the sense that perpetrators operate from behind layers of platform opacity. This amendment narrows that anonymity, at least in defined circumstances.

But for platforms, the stakes escalate sharply.

If they miss the takedown timeline, ignore credible knowledge, or allow systemic blind spots, the consequence is not just a regulatory fine but the potential collapse of safe harbour protection. And once that protection falls away, the intermediary may face direct civil or even criminal exposure under multiple statutes.

It changes the risk calculus entirely.

Compliance is no longer about reputation management or public trust alone. It is about preserving the legal foundation that allows platforms to exist at scale without assuming full liability for user speech.

The message from the 2026 framework is unmistakable: safe harbour is conditional, and the conditions are now far more exacting.

India’s “Sutras and Chakras” Approach and the Global AI Governance Context

India’s 2026 AI regulatory posture doesn’t exist in a vacuum. It’s not a knee-jerk reaction to deepfakes or a one-off compliance exercise. It sits within something larger: a deliberate positioning in the global AI governance debate.

Over the past few years, policymakers have increasingly described India’s approach as a kind of “Third Way.” Not laissez-faire. Not over-prescriptive. Something else. Pragmatic, perhaps. Development-conscious. Techno-legal.

The contrast with other jurisdictions makes this clearer.

The European Union’s AI Act is structured around risk categorisation — detailed, layered, and undeniably compliance-heavy. Critics argue that it reflects the EU’s regulatory culture more than the realities of emerging economies trying to scale innovation quickly.

The United States has leaned toward federal pre-emption and national uniformity, attempting to reduce state-level fragmentation and smooth the path for industry expansion.

China, through amendments to its cybersecurity framework, continues to emphasise centralised oversight and state supervisory authority.

India’s model diverges from each of these in subtle but important ways. It is principle-based, yet enforceable. It speaks the language of growth and inclusion, yet it attaches concrete obligations with hard deadlines. It avoids blanket bans but imposes surgical liabilities.

That balancing act was on display at the India AI Impact Summit 2026 in New Delhi, the first global AI summit hosted in the Global South.

The Summit’s architecture itself was symbolic. It was organised around seven Chakras, thematic working groups spanning Human Capital; Inclusion for Social Empowerment; Safe and Trusted AI; Science; Resilience and Innovation; Democratising AI Resources; and AI for Economic Development. Underpinning them were three Sutras: People, Planet, and Progress.

It sounds almost philosophical at first glance. But look closer, and you see how those principles filter directly into the 2026 Amendment Rules.

The SGI framework protects people from synthetic impersonation and reputational harm. The good-faith exclusions preserve accessibility and education.

The SSMI obligations promote accountability at scale without imposing a blanket freeze on innovation.

Then there’s the infrastructure layer, often overlooked in regulatory discussions.

At Davos, Vaishnaw pointed to something concrete: the operationalisation of a common national compute facility under the IndiaAI Mission, making more than 38,000 GPUs available through public-private partnership at roughly one-third of the global cost.

That matters because regulation without capacity can feel punitive. Regulation paired with sovereign compute infrastructure, AIKosh datasets, multilingual initiatives like BHASHINI, and the IndiaAI Safety Institute starts to look more strategic.

There’s a quiet thesis embedded here: governance and innovation do not have to be adversaries. They can be built in parallel — law shaping incentives, infrastructure lowering barriers.

Comparatively, Australia’s National AI Plan and the European Data Protection Supervisor’s AI risk guidance offer useful frameworks for managing systemic risk.

But India’s distinguishing move has been speed, translating principles into enforceable legal obligations while the deepfake threat curve is still rising.

Whether this model becomes a template for other Global South economies remains to be seen. But one thing is clear. India is not content to import regulatory philosophy wholesale. It is building its own.

Why AI Compliance Is Now a Core Product Function in India

Something fundamental changed in 2026. AI governance in India is no longer an ethical sidebar or a voluntary badge of corporate responsibility. It is an operating condition.

The 2026 Amendment Rules make that unmistakable. Breach the obligations, and the consequences are concrete: loss of the Section 79 safe harbour, mandatory identity disclosures, and exposure to civil and criminal liability under statutes like the Bharatiya Nyaya Sanhita, POCSO, and the Representation of the People Act.

This is not compliance theatre. It is structural. The broader signal is equally clear: the era of AI optimism unmoored from accountability has closed. The next phase will likely be shaped by the forthcoming Digital India Act, which is expected to move further, especially into the unsettled terrain of agentic AI systems.

That’s where the harder questions live. If an autonomous AI agent creates and posts content independently, who is the intermediary? Who possesses “actual knowledge” in a fully automated pipeline? Is awareness tied to the system, the operator, the deployer or all three?

The 2026 framework doesn’t fully answer those questions. But it establishes the scaffolding: proactive prevention, technical accountability, and explicit victim empowerment. Those principles will almost certainly survive into whatever comes next.

What Businesses Should Do Now: AI Compliance Checklist Under India’s 2026 SGI Rules

For enterprises, platforms, and startups operating at the intersection of AI and user-generated content in India, this is not a “monitor and wait” moment. The obligations are already live.

Conduct an AI risk assessment. Map every product feature, every API endpoint, every content pipeline touching audio-visual data. If it can algorithmically generate or modify a human likeness or voice, assume it may fall within the SGI definition under the 2026 Rules. It is safer to scope broadly and narrow later than the reverse.
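The mapping exercise can start as a plain inventory. This minimal sketch assumes a hypothetical list of product features, each tagged with the media it touches and whether it can alter a human likeness or voice. It flags every audio or visual feature with that capability, deliberately scoping broadly as recommended above.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    media: set[str]                 # subset of {"audio", "visual", "audio-visual", "text"}
    alters_likeness_or_voice: bool  # can generate or modify a human face, body, or voice


def sgi_review_scope(features: list[Feature]) -> list[str]:
    """Return names of features to treat as potentially in SGI scope (broad by design)."""
    audio_visual = {"audio", "visual", "audio-visual"}
    return sorted(f.name for f in features
                  if f.media & audio_visual and f.alters_likeness_or_voice)


inventory = [
    Feature("avatar-generator", {"visual"}, True),
    Feature("voice-dubbing-api", {"audio"}, True),
    Feature("auto-captions", {"audio", "text"}, False),
    Feature("blog-writer", {"text"}, False),
]
print(sgi_review_scope(inventory))  # ['avatar-generator', 'voice-dubbing-api']
```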

Update your Grievance Redressal SOPs. The 3-hour window is not aspirational. It is mandatory. That likely means rebuilding workflows around sub-3-hour response capacity: 24/7 staffing or automation, multilingual intake capability, escalation trees that function without bureaucratic delay. If your compliance model still runs on next-business-day review cycles, it is already misaligned.

Invest in provenance infrastructure. Metadata management, labeling interfaces, and anti-tampering controls are no longer “nice-to-have” transparency features. They are compliance prerequisites. And the cost comparison is stark: building compliant systems is almost certainly cheaper than litigating the consequences of losing safe harbour.

Review your SSMI status. If your platform is approaching the 5 million registered user threshold in India, treat that milestone as regulatory acceleration. User declaration workflows and automated SGI detection systems are mandatory for SSMIs under the amended IT Rules. They are not roadmap items. They are legal obligations.

There’s a broader strategic dimension here. India has placed a deliberate wager: that a technically grounded, enforceable synthetic media framework will build long-term trust in its AI ecosystem.

For businesses that adapt early, compliance can become a competitive moat, a signal to users, regulators, and partners that your systems are built for resilience. For those who delay, the calculus is harsher. The margin for error has narrowed. The timelines are unforgiving, and the legal shield that once felt stable now comes with visible cracks if mishandled.

In 2026, compliance is not an add-on. It is product architecture.

Disclaimer

The content provided on this page is designed as an educational explainer to help simplify complex topics and is for general informational purposes only. While we make every effort to ensure the accuracy, completeness, and reliability of the information presented at the time of publication, facts and circumstances can change. The information provided here is on an “as is” basis and does not constitute professional, technical, or legal advice. This explainer should be used as a foundational guide for understanding the topic, and readers are encouraged to conduct their own independent research or consult with relevant professionals before taking any action based on this content.
